Transpose matrix

4/25/2023

Matrix addition and subtraction are done entry-wise, which means that each entry in $A \pm B$ is the sum or difference of the corresponding entries in $A$ and $B$. Two matrices can only be added or subtracted if they have the same size.

Applying $A$ to both sides of the equation $A^Tx=Ax$, which holds for an eigenvector $x$ of $A^TA$ when $A$ is normal, we have $$A^2x=A^TAx=\lambda x.$$ This shows that if $x$ is an eigenvector of $A^TA$ then it is also an eigenvector of $A^2$. The answer takes this to mean that $x$ is an eigenvector of $A$ as well, but that step needs care: every eigenvector of $A$ is an eigenvector of $A^2$, while the converse can fail. Granting that step, we have constructed a full-rank set of eigenvectors of $A$, meaning that it is diagonalizable. For a normal matrix this conclusion does hold over the complex numbers, by the spectral theorem.

Unfortunately, several different notations are in use for the conjugate transpose. While the notation $A^\dagger$ is universally used in quantum field theory, $A^H$ is commonly used in linear algebra. The conjugate transpose is also known as the adjoint matrix, adjugate matrix, Hermitian adjoint, or Hermitian transpose (Strang 1988, p.
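The entry-wise rule for addition and subtraction, including the same-size requirement, can be sketched in a few lines of plain Python. The function name `mat_add` and the sample matrices are illustrative assumptions, not something taken from the text above.

```python
def mat_add(a, b, sign=1):
    """Entry-wise sum (sign=1) or difference (sign=-1) of two matrices.

    Matrices are lists of rows. Both must have the same size.
    """
    same_rows = len(a) == len(b)
    if not same_rows or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("matrices must have the same size")
    # Combine each entry of a with the corresponding entry of b.
    return [[x + sign * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


# Hypothetical sample data.
A = [[1, 2], [3, 4]]
B = [[10, 20], [30, 40]]

total = mat_add(A, B)          # A + B
diff = mat_add(A, B, sign=-1)  # A - B
```

Passing matrices of different sizes raises a `ValueError` instead of silently truncating rows, which mirrors the rule that only equal-sized matrices can be added or subtracted.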

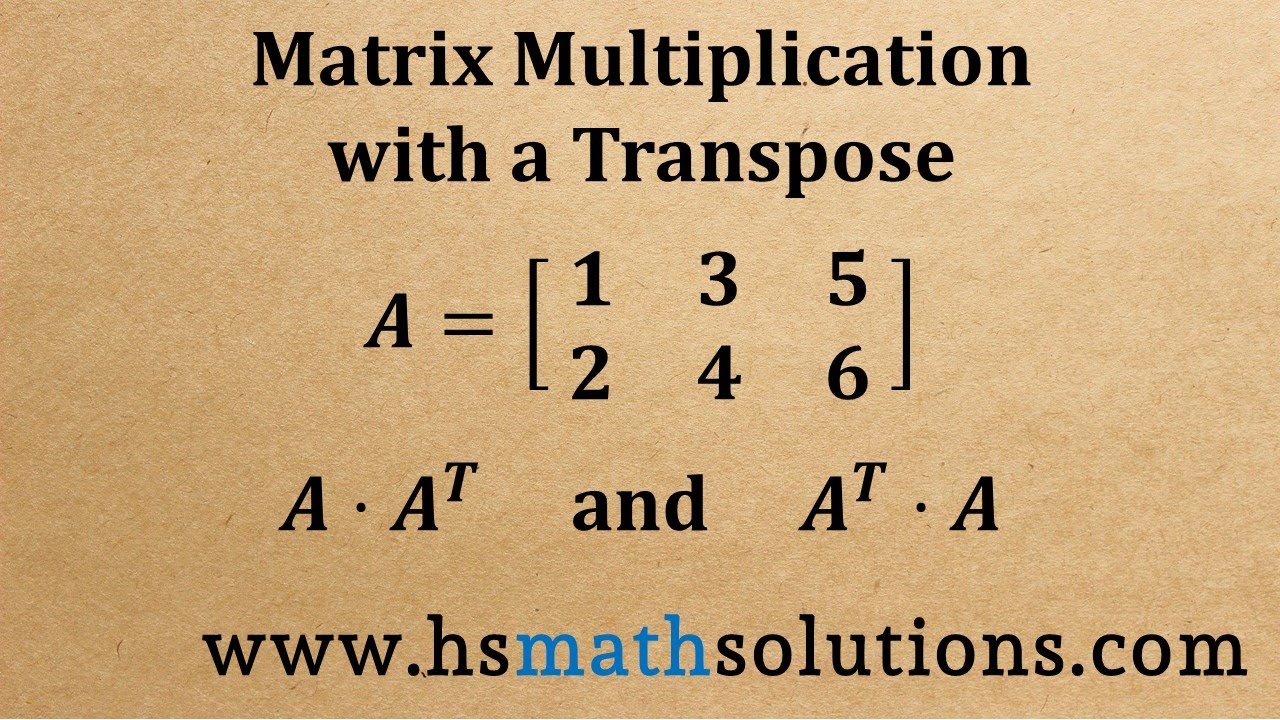

The transpose of a matrix is found by interchanging its rows and columns: each row becomes a column and each column becomes a row. The transpose is denoted by the letter T in the superscript of the given matrix. For example, if $A$ is the given matrix, then its transpose is written $A'$ or $A^T$.

To see that $A$ normal implies $A^TA$ is diagonalizable, let $\lambda$ be an eigenvalue of $A^TA$ corresponding to the eigenvector $x$. Then $$(Ax,Ax)=(A^TAx,x)=\lambda(x,x)$$ and similarly $$(A^Tx,A^Tx)=(AA^Tx,x)=\lambda(x,x),$$ where I have used the normality of $A$. Subtracting these two equations, we have $$((A^T-A)x,(A^T-A)x)=0,$$ which by the definition of inner product implies that $$(A^T-A)x=0 \Rightarrow A^Tx=Ax.$$ Note that of course this is true whenever $A=A^T$, that is, if $A$ is symmetric, but it is a more general condition, since $x$ is an eigenvector rather than an arbitrary vector. As an obvious special case, $A$ is normal if $A$ is Hermitian (symmetric in the real case).
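As a small illustration of the definitions used here, the following plain-Python sketch transposes a matrix by interchanging rows and columns, then checks that $A^TA$ is symmetric and tests the normality condition $A^TA=AA^T$. The helper names (`transpose`, `matmul`, `is_normal`) and the sample matrices are assumptions made for the example.

```python
def transpose(m):
    """Interchange rows and columns: entry (i, j) moves to (j, i)."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Plain matrix product of two lists-of-rows matrices."""
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def is_normal(m):
    """True when m^T m equals m m^T (real case, exact integer arithmetic)."""
    mt = transpose(m)
    return matmul(mt, m) == matmul(m, mt)


# Hypothetical sample matrices.
A = [[1, 2, 3], [4, 5, 6]]  # 2x3, not square
S = [[2, 1], [1, 3]]        # symmetric, hence normal
N = [[1, 1], [0, 1]]        # shear matrix, not normal

At = transpose(A)                            # 3x2
gram = matmul(At, A)                         # A^T A
gram_is_symmetric = transpose(gram) == gram  # symmetric even for non-square A
double_transpose_ok = transpose(At) == A     # (A^T)^T = A
```

Here `is_normal(S)` is `True` and `is_normal(N)` is `False`, while `gram_is_symmetric` holds for any real `A`, which is why normality is the stronger condition.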

Something that occurred to me while reading this answer for help with my homework is that there is a pretty common and important special case: the linear operator $A$ is normal, i.e. $A^TA=AA^T$. Note this is a stronger condition than saying that $A^TA$ is symmetric, which is always true. In this case, we have that $A$ is diagonalizable.

Example 1: Find the transpose of the matrix $A$ and verify that $(A^T)^T=A$. Solution: The transpose $A^T$ is obtained by writing each row of $A$ as a column. Transposing $A^T$ in the same way turns those columns back into the original rows. Hence $(A^T)^T=A$.

Example 2: If $A$ and $B$ are matrices of the same size, verify that $(A+B)^T=A^T+B^T$. Solution: Form the transpose matrices $A^T$ and $B^T$ and add them, then form the transpose of the sum $A+B$. The two results agree entry by entry.

Least Squares methods (employing a matrix multiplied with its transpose) are also very useful with Automated Balancing of Chemical Equations. Related questions include "Any employment for the Varignon parallelogram?", "What is the difference between Finite Difference Methods, Finite Element Methods and Finite Volume Methods for solving PDEs?", "Solving for streamlines from numerical velocity field" and "Scale vector in scaled pivoting (numerical methods)".

Another interesting application of the specialty of $A^TA$ is perhaps the following. Suppose that we have a dedicated matrix inversion routine at our disposal. How can we use this routine for inverting an arbitrary matrix $A$, assuming that the inverse $A^{-1}$ exists?
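One possible reading of the inversion question is sketched below. It assumes the dedicated routine only handles symmetric positive definite matrices (here a hand-written Cholesky solver, an assumption for this example), and it uses the identity $A^{-1}=(A^TA)^{-1}A^T$, which holds whenever $A$ is square and invertible. All function names and the sample matrix are hypothetical.

```python
import math


def transpose(m):
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def cholesky(s):
    """Lower-triangular L with L L^T = s, for symmetric positive definite s."""
    n = len(s)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            acc = s[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                if acc <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(acc)
            else:
                L[i][j] = acc / L[j][j]
    return L


def spd_solve(L, b):
    """Solve (L L^T) x = b by forward, then backward substitution."""
    n = len(L)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def inverse_via_gram(a):
    """Invert a square, invertible matrix using only an SPD solver on a^T a."""
    at = transpose(a)
    L = cholesky(matmul(at, a))
    # Column j of the inverse solves (a^T a) x = (column j of a^T).
    cols = [spd_solve(L, [row[j] for row in at]) for j in range(len(a))]
    return transpose(cols)


A = [[4.0, 7.0], [2.0, 6.0]]  # hypothetical non-symmetric, invertible matrix
A_inv = inverse_via_gram(A)
```

Forming $A^TA$ squares the condition number of $A$, so this route is mainly of interest when only a symmetric positive definite solver is available.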

What is the transpose of a matrix? A matrix is basically a rectangular array of elements. It is arranged in rows and columns, and the number of rows and columns is called its order. To find the transpose of a given matrix in R, call the `t()` function (t for transpose) and pass the matrix as its argument. The function returns the transpose of the supplied matrix.

Special? Yes! The matrix $A^TA$ is abundant with the Least Squares Finite Element Method in Numerical Analysis.

$AA^T$ is positive semi-definite, and in the case in which $A$ is a column matrix, it will be a rank 1 matrix and have only one non-zero eigenvalue, which equals $A^TA$ (a scalar in this case), and its corresponding eigenvector is $A$. The rest of the eigenvectors span the null space of $A^T$, i.e. the vectors $w$ with $A^Tw=0$.

Moreover, we can infer the eigenvectors of $A^TA$ from $AA^T$ and vice versa. Indeed, independent of the size of $A$, there is a useful relation between the eigenvectors of $AA^T$ and the eigenvectors of $A^TA$, based on the property that $\operatorname{rank}(AA^T)=\operatorname{rank}(A^TA)$. That the rank is identical implies that the number of non-zero eigenvalues is identical. The eigenvector decomposition of $AA^T$ is given by $AA^Tv_i = \lambda_i v_i$. In case $A$ is not a square matrix and $AA^T$ is too large to efficiently compute the eigenvectors (as frequently occurs in covariance matrix computation), it is easier to compute the eigenvectors of $A^TA$, given by $A^TAu_i = \lambda_i u_i$. Pre-multiplying both sides of this equation with $A$ yields $$AA^T(Au_i)=\lambda_i (Au_i).$$ Now, the originally searched eigenvectors $v_i$ of $AA^T$ can easily be obtained by $v_i:=Au_i$. Note that the resulting eigenvectors are not yet normalized.
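The relation $v_i = Au_i$ can be checked numerically with a short sketch. It assumes a small hypothetical $3\times 2$ matrix and uses plain power iteration (a technique chosen for this example, not one named in the text) to find the dominant eigenpair of the smaller matrix $A^TA$, then maps the eigenvector to $AA^T$.

```python
import math


def transpose(m):
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def matvec(m, v):
    return [sum(x * y for x, y in zip(row, v)) for row in m]


def power_iteration(s, steps=200):
    """Dominant eigenpair of a symmetric positive semi-definite matrix s."""
    v = [1.0] * len(s)
    for _ in range(steps):
        w = matvec(s, v)
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient; v has unit length at this point.
    lam = sum(x * y for x, y in zip(v, matvec(s, v)))
    return lam, v


A = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # hypothetical 3x2 matrix
At = transpose(A)
small = matmul(At, A)  # A^T A, 2x2
large = matmul(A, At)  # A A^T, 3x3

lam, u = power_iteration(small)  # A^T A u = lam u
v = matvec(A, u)                 # candidate eigenvector of A A^T, not normalized

# Residual of A A^T v = lam v; every entry should be close to zero.
residual = [x - lam * y for x, y in zip(matvec(large, v), v)]
```

The eigenvalue is shared by both matrices, and `v` still has to be divided by its length if a unit eigenvector is needed.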
